What are pixels exactly?

When talking about colors, designers and developers need a shared convention, called a color space, to understand each other. Designers have popularized the practice of giving each color a unique name (e.g. "Nostalgia Rose", "Cloud Dancer", "Cocoon" from Pantone), whereas developers use hexadecimal codes. Some can even recognize a color just by looking at the digits of its hexadecimal code.

Hex codes follow the RGB color space (Red Green Blue). For example, the main accent color of our brand, the purple of TeleportHQ, is represented as #822CEC. Each pair of hexadecimal digits encodes one channel on a scale from 0 to 255, which gives Red 51%, Green 17% and Blue 93%. Other color space conventions exist, like HSL (hue, saturation, lightness) and HSV (hue, saturation, value). These can be better suited to specific cases.
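To make the link between a hex code and its channels concrete, here is a minimal sketch that computes the channel values directly from the code. The helper names `hex_to_rgb` and `as_percentages` are ours, chosen for illustration; the HSL and HSV conversions rely on Python's standard `colorsys` module.

```python
import colorsys


def hex_to_rgb(hex_code):
    """Split a '#RRGGBB' string into three 0-255 channel values."""
    digits = hex_code.lstrip("#")
    if len(digits) != 6:
        raise ValueError(f"expected 6 hex digits, got {hex_code!r}")
    # Each pair of hex digits is one channel: RR, GG, BB.
    return tuple(int(digits[i:i + 2], 16) for i in (0, 2, 4))


def as_percentages(rgb):
    """Express 0-255 channel values as rounded percentages of full intensity."""
    return tuple(round(100 * channel / 255) for channel in rgb)


red, green, blue = hex_to_rgb("#822CEC")
print((red, green, blue))                   # (130, 44, 236)
print(as_percentages((red, green, blue)))   # (51, 17, 93)

# colorsys expects floats in [0, 1]. Note that rgb_to_hls returns
# hue, LIGHTNESS, saturation (HLS order, not HSL).
hue, lightness, saturation = colorsys.rgb_to_hls(red / 255, green / 255, blue / 255)
hsv_hue, hsv_saturation, value = colorsys.rgb_to_hsv(red / 255, green / 255, blue / 255)

print(f"HSL: {hue * 360:.0f} deg, {saturation:.0%}, {lightness:.0%}")
print(f"HSV: {hsv_hue * 360:.0f} deg, {hsv_saturation:.0%}, {value:.0%}")
```

The same color therefore has three equivalent descriptions; which one is most convenient depends on whether you want to reason about channel intensities (RGB) or about hue and brightness (HSL, HSV).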

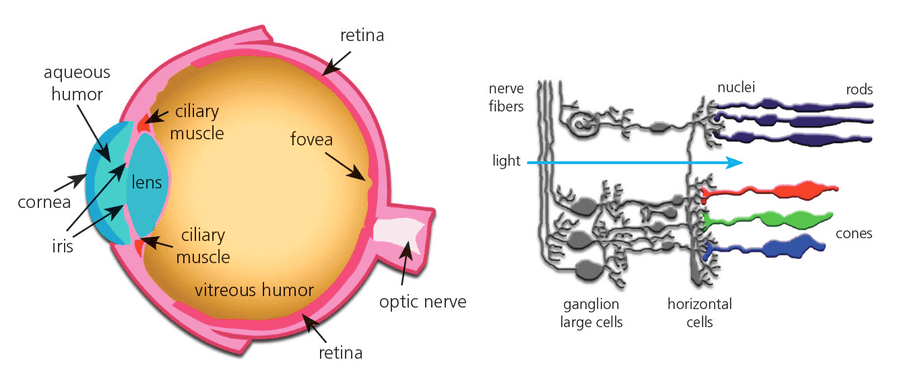

The RGB color space is inspired by the way our own eyes perceive the colors around us: cone cells measure the intensity (brightness) of the light at different energy levels (color). Those cells then transfer that information to the brain, allowing us to perceive our environment. Note that not all animals have trichromatic color vision: marine mammals are monochromats and most reptiles are tetrachromats, with one and four types of cone cells respectively. Four types of cones are thought to allow tetrachromats to see 100 million colors, where most humans can ‘only’ see 10 million [ref wikipedia].

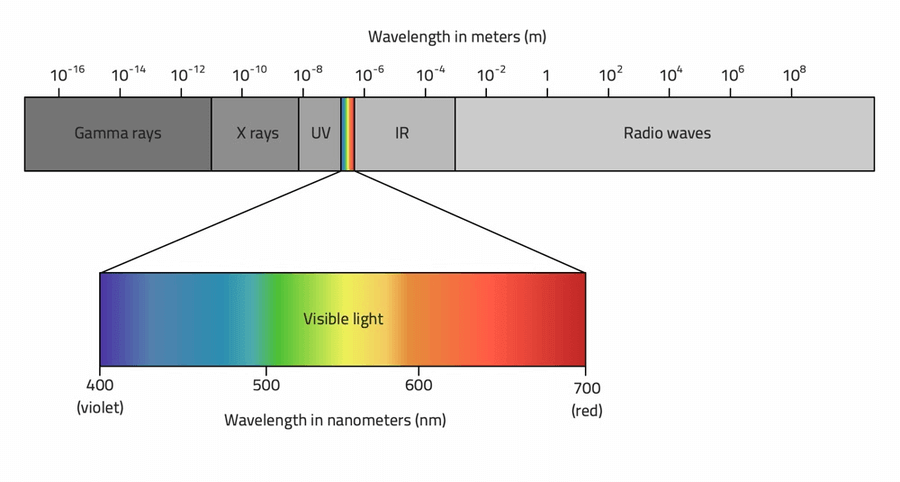

Those notions of intensity and energy come from physics. Light is composed of photons of different energies, and we are only able to perceive light within a certain range of wavelengths. Higher-energy photons belong to the ultraviolet domain (which burns our skin) and lower-energy photons belong to the infrared domain (our body heat emits such photons, which can be seen by infrared cameras).
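The relation between color and energy can be made explicit with the Planck relation E = hc/λ: the shorter the wavelength, the more energetic the photon. The sketch below is only an illustration; the function name `photon_energy_ev` is ours, and the sample wavelengths are approximate, commonly quoted values rather than figures taken from this article.

```python
PLANCK_CONSTANT = 6.62607015e-34   # joule-seconds (exact SI value)
SPEED_OF_LIGHT = 299_792_458       # metres per second (exact SI value)
ELECTRON_VOLT = 1.602176634e-19    # joules per electronvolt (exact SI value)


def photon_energy_ev(wavelength_nm):
    """Energy of a single photon, in electronvolts, from E = h * c / wavelength."""
    wavelength_m = wavelength_nm * 1e-9
    return PLANCK_CONSTANT * SPEED_OF_LIGHT / wavelength_m / ELECTRON_VOLT


# Approximate, illustrative wavelengths (assumptions, not measurements).
samples = {
    "ultraviolet": 300,
    "blue": 450,
    "green": 550,
    "red": 650,
    "infrared": 1000,
}

for name, wavelength_nm in samples.items():
    print(f"{name:>11}: {wavelength_nm} nm -> {photon_energy_ev(wavelength_nm):.2f} eV")
```

Running it shows the energy decreasing steadily from ultraviolet to infrared, with blue photons carrying more energy than red ones.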

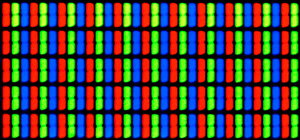

In a computer screen, each pixel is composed of three small LEDs: red, green and blue. To render a color, the computer adjusts the intensity of the light emitted by each LED, and our brain does the rest of the processing.

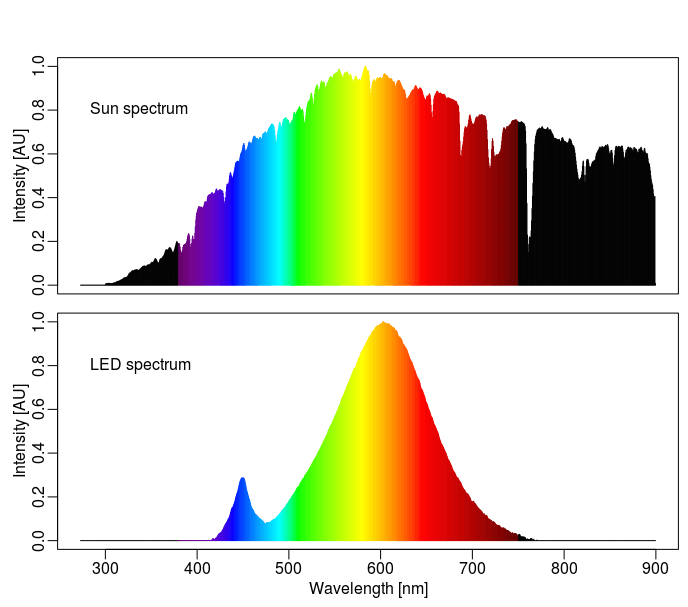

The resulting light is meant to reproduce what we see in nature, but there is a catch. The color blue is not as abundant in nature as it is on a computer screen, and the white of a screen, for example, is quite different from the color of the sun. This is why blue light is so tiring for our eyes, and why yellow filters and light mode filters are quite useful for those who spend a lot of time in front of their screens.

Machine learning excels with images

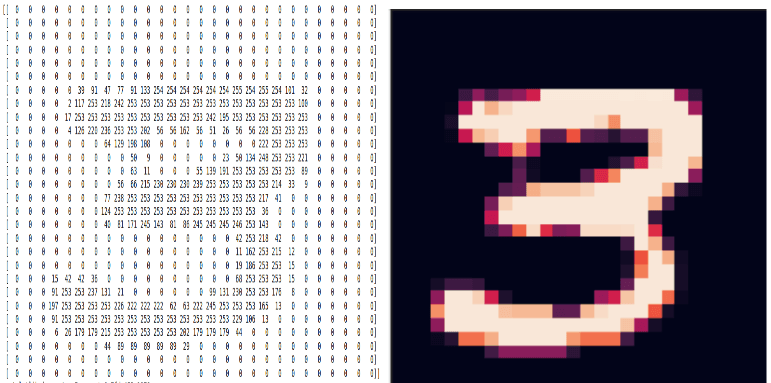

When it comes to image analysis, machine learning systems do not look at hexadecimal codes or color names. Instead, they look at the pixel values, in much the same way our eyes look at those same pixels. For the machine, the following two images are the same:

In order to work with images, machine learning uses an interesting trick: it scans the images by looking at patches of pixels instead of independent pixels. This allows the detection of more complex patterns and greatly improves the performance of the detection algorithm. The components that perform this scanning are called convolutional layers. By stacking many such layers together (the famous deep learning), we can train models that are able to learn more abstract, high-level features. Even though they were invented in the ‘90s [ref], the computing power and large datasets required for them to shine only became available in 2012 [ref].
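The idea of scanning patches rather than single pixels can be sketched in a few lines of plain Python. This is a simplified illustration, not a production implementation: the function name `convolve2d` is ours, the image is a tiny grayscale grid, and the kernel is a hand-written Sobel edge detector, whereas a real convolutional layer learns its kernel values during training.

```python
def convolve2d(image, kernel):
    """Slide `kernel` over `image` (both lists of rows) without padding.

    Strictly speaking this is a cross-correlation (the kernel is not flipped),
    which is what deep learning libraries usually call a convolution.
    """
    k_rows, k_cols = len(kernel), len(kernel[0])
    out_rows = len(image) - k_rows + 1
    out_cols = len(image[0]) - k_cols + 1
    output = []
    for top in range(out_rows):
        row = []
        for left in range(out_cols):
            # Weighted sum of one patch of pixels.
            total = 0
            for i in range(k_rows):
                for j in range(k_cols):
                    total += image[top + i][left + j] * kernel[i][j]
            row.append(total)
        output.append(row)
    return output


# 5x5 grayscale image: dark on the left, bright on the right.
image = [[0, 0, 255, 255, 255] for _ in range(5)]

# Sobel kernel that responds to vertical edges (dark-to-bright, left to right).
sobel_x = [
    [-1, 0, 1],
    [-2, 0, 2],
    [-1, 0, 1],
]

feature_map = convolve2d(image, sobel_x)
for row in feature_map:
    print(row)  # [1020, 1020, 0] for each of the three rows
```

The output is large wherever a patch straddles the dark-to-bright boundary and zero where the patch is uniformly bright. No single pixel carries that information; it only appears when neighboring pixels are considered together.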

However, even after such a long time, it is still not fully understood why neural networks give such good results [ref]. One of the best illustrations comes from a group of researchers who demonstrated that it was possible to trick the algorithm into thinking that turtle shells were rifles [ref]. One explanation for why this happened could be that the neural network focuses more on the texture of small patches of pixels than on the big picture itself, which contradicts what was said above about those algorithms being able to learn abstract features.

What about screenshots?

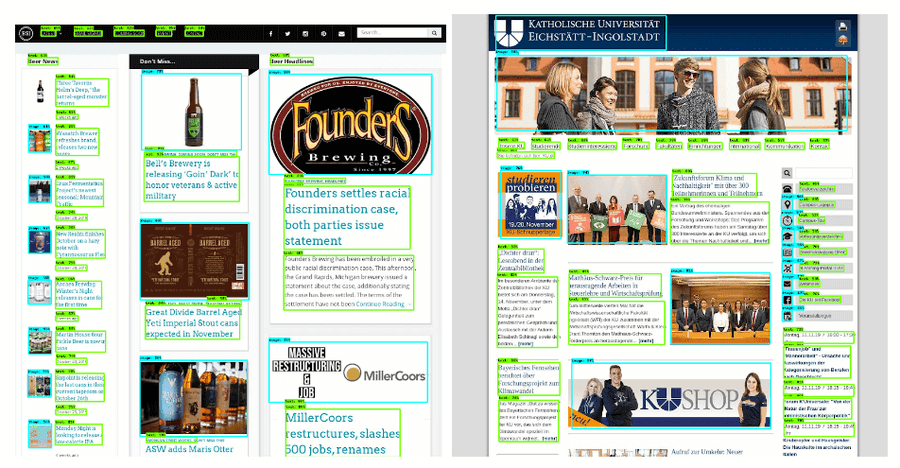

This brings us to the difference between screenshots and photographs. In a photograph, the image contains very fine gradients that help the model extract information from the picture and make valuable decisions. In a screenshot, those gradients are much less present or, quite often, totally absent because large parts of the image are a single flat color, leaving very little information for the model to extract.
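One rough way to quantify this difference is to count how often two neighboring pixels have exactly the same value. The sketch below is only an illustration on synthetic data: `flat_fraction` is a hypothetical metric of ours, not the measure used in our pipeline, and the two images are artificial stand-ins (a smooth ramp for a photograph, a flat background with one rectangle for a screenshot).

```python
def flat_fraction(image):
    """Fraction of horizontally adjacent pixel pairs that have identical values."""
    pairs = 0
    flat = 0
    for row in image:
        for left, right in zip(row, row[1:]):
            pairs += 1
            flat += left == right
    return flat / pairs


def distinct_values(image):
    """Number of different pixel values present in the image."""
    return len({pixel for row in image for pixel in row})


WIDTH, HEIGHT = 64, 64

# Stand-in for a photograph: brightness changes smoothly everywhere.
photo_like = [[2 * x + y for x in range(WIDTH)] for y in range(HEIGHT)]

# Stand-in for a screenshot: white background with one dark "button".
screenshot_like = [
    [40 if 16 <= x < 48 and 24 <= y < 40 else 255 for x in range(WIDTH)]
    for y in range(HEIGHT)
]

for name, img in (("photo-like", photo_like), ("screenshot-like", screenshot_like)):
    print(f"{name}: flat fraction = {flat_fraction(img):.3f}, "
          f"distinct values = {distinct_values(img)}")
```

On these synthetic inputs the ramp has no flat pairs at all, while more than 99% of the pairs in the screenshot-like image are flat, and the only variation sits on the edges of the rectangle.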

Those algorithms are still valuable, but not for all types of problems. For example, at TeleportHQ we perform several types of machine learning tasks along our code-generation pipeline, which starts from wireframes or high definition designs. On the one hand, we obtain good results when using object detection to find individual widgets in a screenshot. On the other hand, when trying to classify mobile app screenshots into different types of screens (gallery, popup, menu, forms), the model is not able to learn the high-level concepts, and a simpler decision tree proved to perform better. We will publish more information about those two specific projects soon.

Conclusion

It is not yet clear whether this difference between screenshots and real-life images really impacts the performance of deep neural networks. However, the difference is striking when looking at the 3D view of the pixels themselves: it’s almost like comparing a city landscape with a countryside landscape.

The ML community has accepted deep learning as the undisputed champion of computer vision, but in the case of web and mobile application screenshots, we believe that other techniques may still have a chance.

Currently, we’re exploring a hybrid approach that combines the results of computer vision and Natural Language Processing techniques applied to the same data source: HTML strings and their respective DOM representation and rendered computed data. We will discuss this approach in a soon-to-be-published blog post.

As always, we’re extremely interested in exchanging ideas with other enthusiasts on this topic. If you’re interested in our research, please feel free to contact us through any of our social media channels found in the footer of this website.